Data science

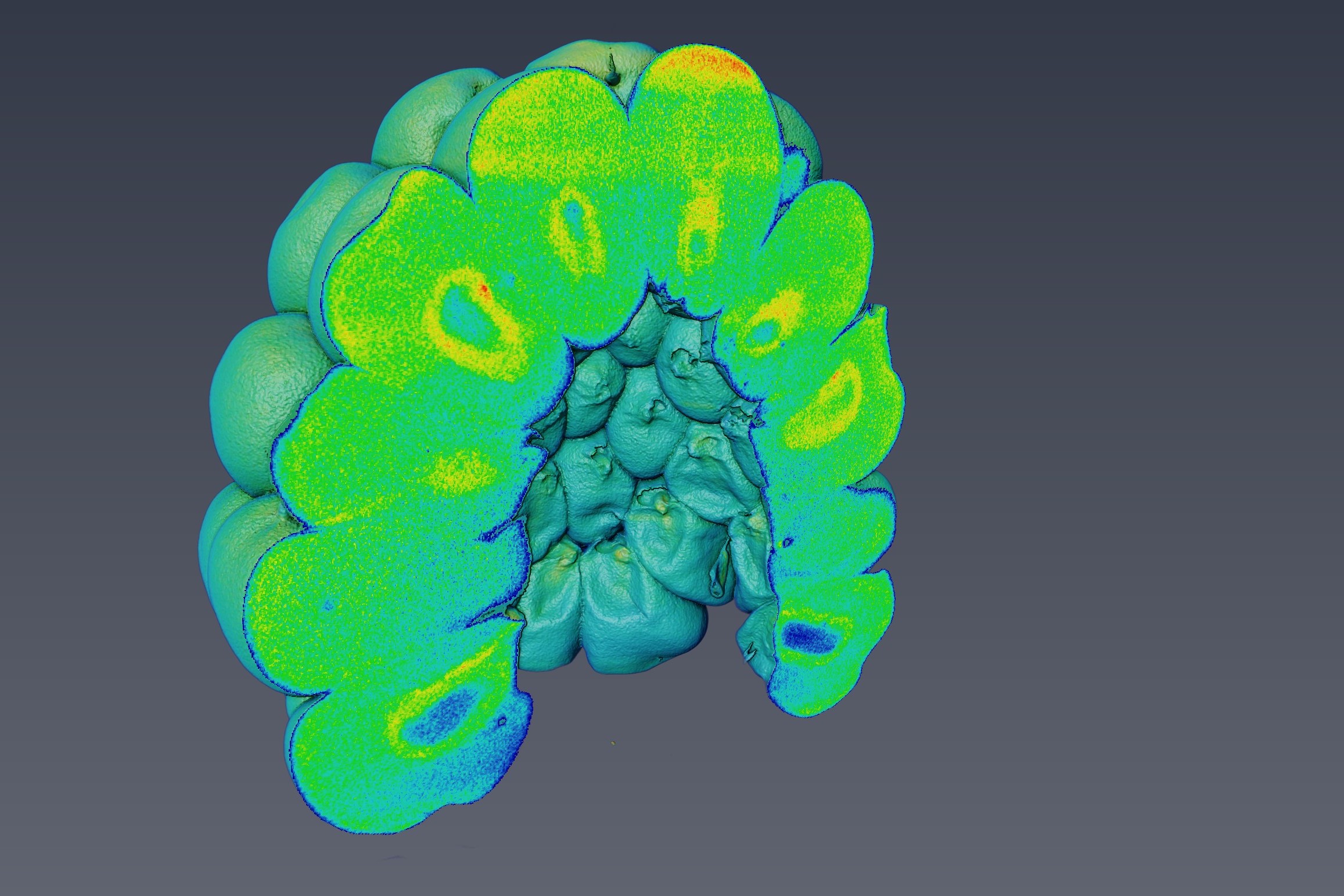

Analyzing and interpreting the data from the large-scale facilities often takes 100 times longer than collecting it. With the even larger amounts of data from the next generation of sources, including ESS and MAX IV, the existing approach to data analysis will not be able to keep up. Computing power is likewise the biggest limitation in 3D modeling.

One approach with great potential to simplify data analysis is to use data-driven, end-to-end analysis methods, which have been a game-changer in many other fields. Their advantage is simplicity: model parameters are learned from data, which reduces the need to design advanced algorithms for specific problems. However, these methods require large amounts of training data. Another approach exploits the fact that many material systems have strong geometric priors, which are often used in simulation models. The aim is to investigate how such priors can be coupled with the segmentation of volumetric images, combining probabilistic segmentation techniques with physically based models of material structures. SOLID's combined expertise in material systems, modeling and data analysis makes it a unique environment for this research.

To ensure that data from the new sources can be converted into quantitative measurements or used in 3D simulations, the approach to data analysis must be rethought. This is precisely the basis for QIM, which was established in 2018. QIM's goal is to ensure a structured approach to data analysis through software pipelines, as well as through training and collaboration with users.